Please use the script to find all duplicate files from your computer on the specific folder or path

Note: Script will find duplicate files based on its hash string value (MD5), not only based on file’ name.

You can also change the path to find duplicates over there!

## find and remove duplicate files, by hash (by file' content)!

import os

import hashlib

def hashfile(path, blocksize = 65536):

try:

afile = open(path, 'rb')

hasher = hashlib.md5()

buf = afile.read(blocksize)

while len(buf) > 0:

hasher.update(buf)

buf = afile.read(blocksize)

afile.close()

return hasher.hexdigest()

except Exception as e:

print e

def findDup(parentFolder):

# Dups in format {hash:[names]}

dups = {}

for dirName, subdirs, fileList in os.walk(parentFolder):

print('Scanning %s...' % dirName)

for filename in fileList:

# Get the path to the file

path = os.path.join(dirName, filename)

# Calculate hash

file_hash = hashfile(path)

# Add or append the file path

if file_hash in dups:

dups[file_hash].append(path)

else:

dups[file_hash] = [path]

return dups

def printResults(dict1):

results = list(filter(lambda x: len(x) > 1, dict1.values()))

if len(results) > 0:

print('Duplicates Found:')

print('The following files are same. The name could differ, but the content is same')

print('__')

for result in results:

for subresult in result:

print('%s' % subresult)

print('__')

else:

print('No duplicate files found.')

def removeDuplicates(dict1):

results = list(filter(lambda x: len(x) > 1, dict1.values()))

if len(results) > 0:

print '

'

print 'Removed Files:'

print('__')

for result in results:

for ele in result[:-1]:

os.remove(ele)

print ele

print('__')

else:

pass

if __name__ == '__main__':

dict = findDup('C:\\Users\\Administrator\\Downloads') ## you change your path here...

printResults(dict)

removeDuplicates(dict)

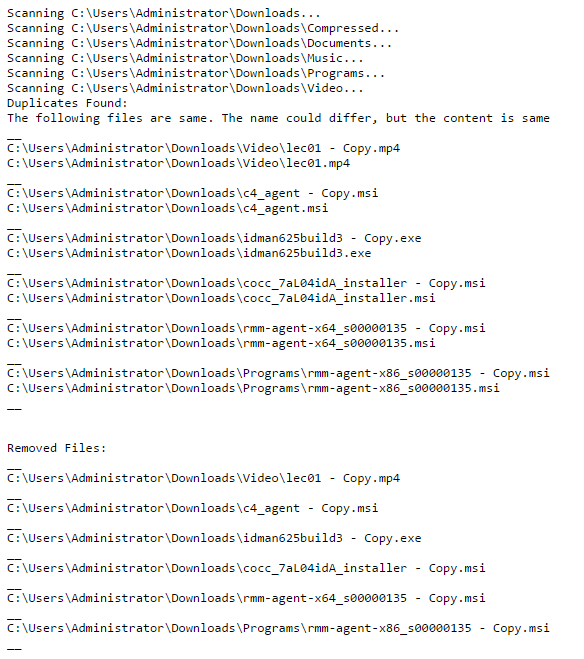

Sample Output: